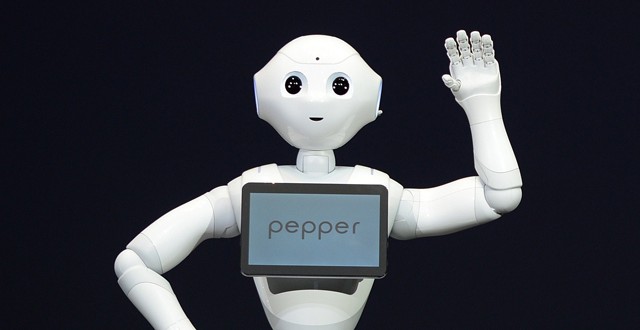

The Japanese company Softbank recently announced that it plans to start selling its Pepper humanoid robot to consumers in February. The $2,000 robot will work as a babysitter, nurse, and emergency medical worker. Pepper will also be able to respond to human emotions, according to Softbank's CEO Masayoshi Son. More and more companies seem to be developing an interest in robotics. Softbank made its intentions clear three or four years ago when it partnered with Aldebaran, a company that has been building smaller humanoid robots for some time. Those robots were mostly for demonstration, but Pepper is intended to be a real consumer robot.

The problem with many of the humanoid robots we've seen so far, including Honda's Asimo, is that they never make it to market. Earlier this year we saw an incredible demonstration of the bipedal Asimo, but Honda still couldn't say whether it will ever be used in homes. It would also probably cost a lot more than Pepper. If Pepper really ends up costing only $2,000, that would be incredible! Many people are skeptical, given how many resources it takes to build robots at this level. Pepper has numerous sensors in its hands and head, and it has face recognition as well. It also features voice recognition, is connected to the cloud, and has artificial intelligence. Hardware that can do this much typically costs far more than $2,000. The robot is about 4 feet tall and weighs about 60 pounds.

It's extremely exciting to see something like this, but we should probably take it with a grain of salt. Masayoshi Son tends to be somewhat bombastic, so let's wait until 2015 and see whether Pepper actually reaches consumers, at least in Japan. Japan has long led the way in robotics, partly because its population is aging faster than that of, for example, the U.S., so it needs home health care workers more than most countries. We don't yet know how much weight Pepper can hold, so we can't be sure how good a babysitter it will turn out to be. Son says Pepper will be affectionate and will respond to human emotions through what Softbank calls an "emotional engine." The robot uses facial recognition to tell the difference between a smile and a frown, and vocal intonation to tell whether you're happy or sad. It will use the cloud to get smarter over time, so it might eventually become relatively human-like.

Load the Game Video Games, Reviews, Game News, Game Reviews & Game Video Trailers